The show_batch, show_results and, more importantly, predict methods fail when they try to rebuild a dataframe. The problem comes from the decode function of ReadTabBatch.

The conversion logic dispatches on the input type: `torch.tensor(x, **kwargs) if isinstance(x, (tuple,list))`, `else _array2tensor(x) if isinstance(x, ndarray)`, `else _pandas2tensor(x, **kwargs) if isinstance(x, (pd.Series, pd.DataFrame))`, `else as_tensor(x, **kwargs) if hasattr(x, '__array__') or is_iter(x)`. A commented-out line precedes that chain, `# if isinstance(x, (tuple,list)) and len(x)==0: return tensor(0)`, and the result is cast down at the end: `if res.dtype is torch.float64: return res.float()`.

We'll do so as follows: `X = np.concatenate((Xtrain, Xvalid))`, `y = np.concatenate((ytrain, yvalid))`, `np.save('./data/UCR/StarLightCurves/X.npy', X)`, `np.save('./data/UCR/StarLightCurves/y.` …
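The concatenate-and-save step above can be sketched as follows. This is only a minimal illustration: the small random arrays, their shapes, the temporary output directory and the output file names are assumptions standing in for the real data and paths.

```python
import os
import tempfile

import numpy as np

# Toy stand-ins for the real splits (shapes are assumptions for illustration).
rng = np.random.default_rng(0)
Xtrain = rng.normal(size=(6, 4))
Xvalid = rng.normal(size=(3, 4))
ytrain = rng.integers(0, 3, size=6)
yvalid = rng.integers(0, 3, size=3)

# Join the splits along the first (sample) axis.
X = np.concatenate((Xtrain, Xvalid))
y = np.concatenate((ytrain, yvalid))

# Save into a temporary directory instead of a hardcoded path.
out_dir = tempfile.mkdtemp()
np.save(os.path.join(out_dir, "X.npy"), X)
np.save(os.path.join(out_dir, "y.npy"), y)

# Reload to check that the round trip preserved the arrays.
X_loaded = np.load(os.path.join(out_dir, "X.npy"))
y_loaded = np.load(os.path.join(out_dir, "y.npy"))
```

Keeping the features and labels in two separate `.npy` files means each can be reloaded on its own with `np.load`.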

`indexed` (bool): The DataLoader will make a guess as to whether the dataset can be indexed (or is iterable). `drop_last` (bool): If True, then the last incomplete batch is dropped. `shuffle` (bool): If True, then data is shuffled every time the dataloader is fully read/iterated. `batch_size` (int): It is only provided for PyTorch compatibility.

The conversion helper is documented as "Like `torch.as_tensor`, but handle lists too, and can pass multiple vector elements directly." and carries the comment `# There was a Pytorch bug in dataloader using num_workers>0.` The injected function ends with `return as_tensor(v, device=None, requires_grad=False, pin_memory=False)`.

Our inputs immediately pass through a BatchSwapNoise module, based on the Porto Seguro winning solution, which injects random noise into our data for variability. After going through the embedding matrix, the 'layers' of our model include an Encoder and Decoder (shown below), which compress our data to a 128-long vector before blowing it back up.

The pandas library [8] already provides excellent support for processing tabular data sets, and fastai does not attempt to replace it.
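The effect of the shuffle and drop-last flags described above can be illustrated with a small pure-Python batching sketch. The helper `iter_batches` and its `seed` argument are hypothetical names introduced here for illustration; this is not fastai's DataLoader code, only the same batching idea under simplified assumptions.

```python
import random


def iter_batches(items, bs, shuffle=False, drop_last=False, seed=None):
    """Yield lists of up to `bs` items (hypothetical helper, not fastai code)."""
    idxs = list(range(len(items)))
    if shuffle:
        # Shuffle the index order for this pass; a fixed seed makes it repeatable.
        random.Random(seed).shuffle(idxs)
    for start in range(0, len(idxs), bs):
        chunk = idxs[start:start + bs]
        if drop_last and len(chunk) < bs:
            # Skip the trailing batch when it is incomplete.
            break
        yield [items[i] for i in chunk]


data = list(range(10))
full = list(iter_batches(data, bs=4))
trimmed = list(iter_batches(data, bs=4, drop_last=True))
shuffled = list(iter_batches(data, bs=4, shuffle=True, seed=0))
```

With ten items and a batch size of four, `full` keeps a final batch of two items, while `trimmed` discards it; `shuffled` contains the same items in a permuted order.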

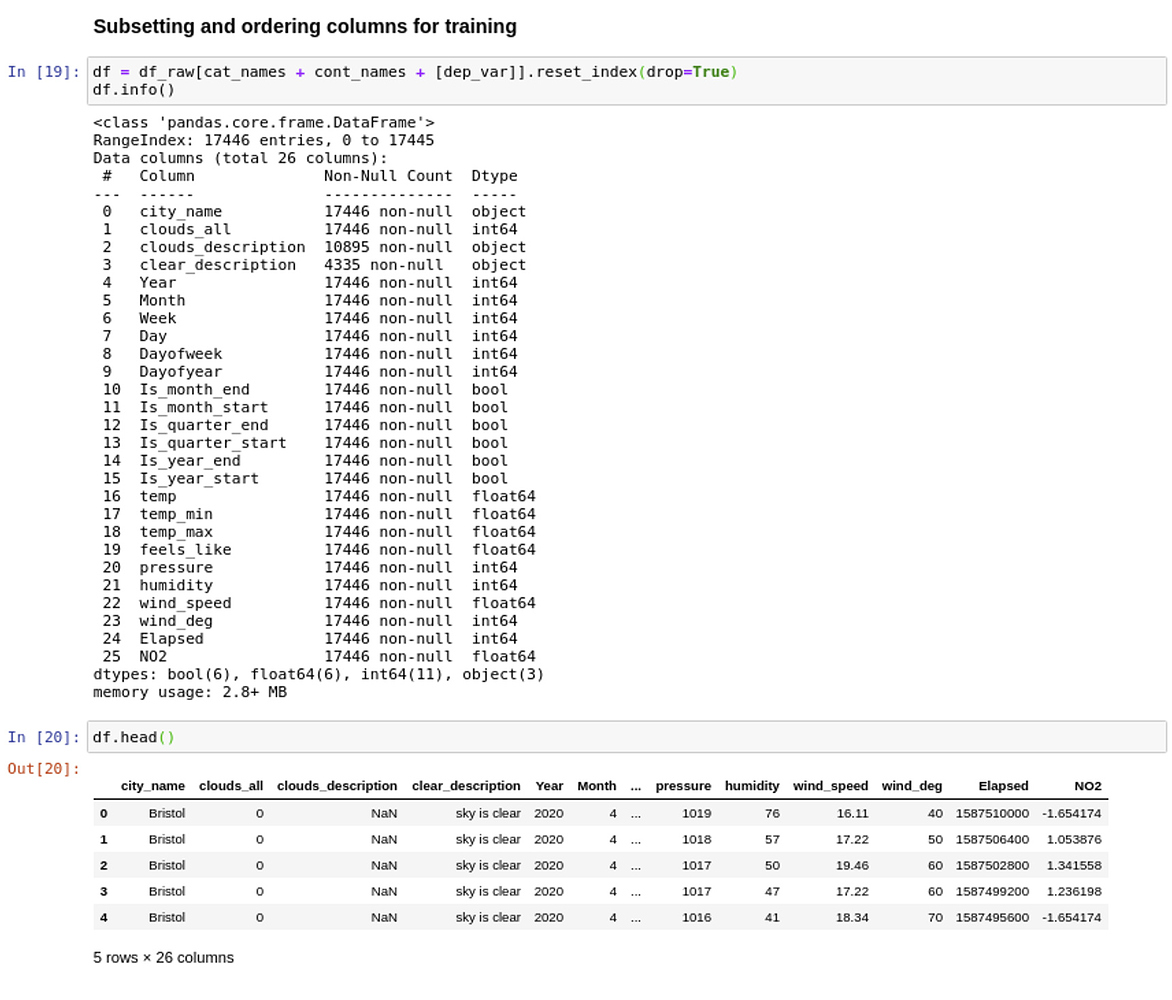

Load the best model: `losses = np.array()`, `best = np.argmin(losses.` … In this notebook, we used a basic fastai TabularLearner to generate …

Let us say you have 2D daily data with shape (365,10) for five years, saved in the np array np3Darray, which will have a shape (5,365,10). In Python, save your np array: `import scipy.io as sio` (the SciPy module to load and save mat-files), `m['np3Darray'] = np3Darray` with shape (5,365,10), `sio.savemat('file.` …

I managed to get it training when I inject the following code into fastai: `def _pandas2tensor(x, **kwargs):` with the docstring "Converts pandas Dataframe or Series into numpy array.", followed by `v = x.values # extracts the values as a numpy array`, `if (v.dtype == np.object_): # deals with arrays whose item type is itself a numpy array` and `if nb_rows == 1: v = v.item() # only one row, cannot stack`.

Tabular data: helper functions to get data in a DataLoaders in the tabular application and higher class TabularDataLoaders. The main class to get your data ready for model training is TabularDataLoaders and its factory methods. Check out the tabular tutorial for examples of use.
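The object-dtype handling described above can be sketched with NumPy alone. The helper name `unpack_object_array` is hypothetical, and the `np.stack` call in the multi-row branch is an assumption about the part of the injected code that is not shown; only the dtype check and the single-row `item()` call come from the text.

```python
import numpy as np


def unpack_object_array(v):
    """Turn a 1-D object array of equal-shape arrays into a numeric array.

    Hypothetical helper; the stacking branch is an assumption.
    """
    if v.dtype == np.object_:
        nb_rows = v.shape[0]
        if nb_rows == 1:
            # Only one row, cannot stack: take the inner array directly.
            v = v.item()
        else:
            # Several rows: stack the inner arrays into shape (nb_rows, ...).
            v = np.stack(list(v))
    return v


# Build object arrays explicitly so NumPy does not collapse them to 2-D.
col = np.empty(3, dtype=object)
for i in range(3):
    col[i] = np.arange(4, dtype=np.float64) + i

single = np.empty(1, dtype=object)
single[0] = np.arange(4, dtype=np.float64)

stacked = unpack_object_array(col)
one_row = unpack_object_array(single)
```

Note that the single-row case returns the inner array without a leading row axis, so callers that expect a 2-D result may need to add that axis themselves.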
